ibm-event-streams

Back Up Event Streams Using the Camel S3 Connector

Objective

Back up a source Event Streams instance. The backup should include the data of all topics (including the Schema Registry). Backed-up data is stored in IBM Cloud Object Storage (COS). Consumer lags (consumer group offsets) are not backed up.

Prerequisites

- A backup (target) Kafka cluster, preferably running the same version as the source.

- A Kafka Connect cluster is not needed.

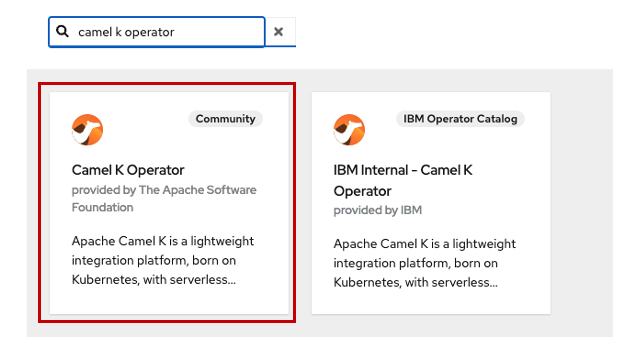

- The Camel-K community operator installed.

- The following information about an IBM COS instance (refer here for how to create buckets and get the details of an IBM COS instance):

  - Access key
  - Secret key
  - Bucket name
  - Public endpoint of the IBM COS instance

Set Up Camel-K

To be done in both the Source and Target Event Streams Namespaces

- Install the Camel-K community operator from OperatorHub (if not done yet). You can install it in the same namespace as Event Streams.

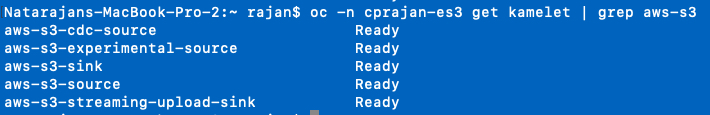

Once the operator is installed, you should be able to list the installed Kamelets:

oc -n <NAMESPACE> get kamelets

In the output, make sure the following two Kamelets are listed, as we will be using them:

- aws-s3-sink
- aws-s3-source

To be done in the Source Namespace

This step backs up the topic contents to IBM COS.

- Create an s3-sink KameletBinding. You can start from the sample KameletBinding YAML file provided here. Create the KameletBinding with:

oc -n <NAMESPACE> apply -f <yaml file>

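The main parameters that must be checked and changed are:

- Kafka connection details: bootstrapServers, topic and the connection (security) parameters. For testing purposes, you can use a PLAINTEXT connection.
- S3 connection details: bucketNameOrArn, accessKey, secretKey, overrideEndpoint (MUST be set to true, so that the IBM COS endpoint is used instead of the AWS one) and uriEndpointOverride (the public endpoint of the IBM COS instance).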

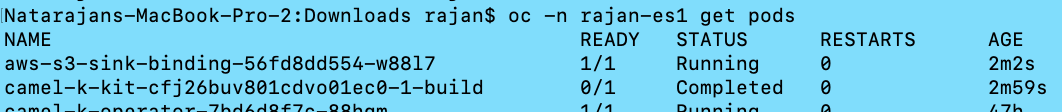

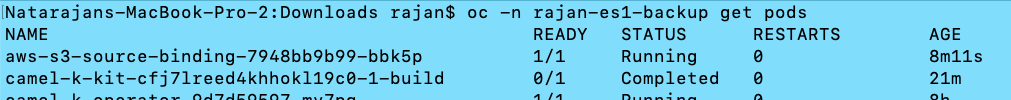
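As a minimal sketch of what an s3-sink KameletBinding can look like (not the sample file referenced above), the script below writes one to s3-sink-binding.yaml. It assumes the Camel K 1.x API (camel.apache.org/v1alpha1), that the Kafka side is read through the kafka-source Kamelet from the Apache Camel Kamelet catalog, and the property names documented in that catalog. The binding name, file name, topic, bootstrap address, bucket, region and endpoint are hypothetical placeholders. Depending on the catalog version, kafka-source may also require user and password, and aws-s3-sink typically requires a region value even when the endpoint is overridden.

```shell
#!/usr/bin/env bash
# Minimal sketch: write an s3-sink KameletBinding (Kafka topic -> IBM COS bucket).
# All default values are hypothetical placeholders; override them via environment variables.
set -euo pipefail

KAFKA_BOOTSTRAP="${KAFKA_BOOTSTRAP:-my-source-kafka-bootstrap:9092}"  # hypothetical PLAINTEXT listener
KAFKA_TOPIC="${KAFKA_TOPIC:-my-topic}"                                # hypothetical topic to back up
COS_BUCKET="${COS_BUCKET:-my-backup-bucket}"                          # hypothetical bucket name
COS_ACCESS_KEY="${COS_ACCESS_KEY:-<ACCESS-KEY>}"
COS_SECRET_KEY="${COS_SECRET_KEY:-<SECRET>}"
# Example public endpoint format; use the endpoint shown for your own IBM COS instance.
COS_ENDPOINT="${COS_ENDPOINT:-https://s3.us-south.cloud-object-storage.appdomain.cloud}"

cat > s3-sink-binding.yaml <<EOF
apiVersion: camel.apache.org/v1alpha1
kind: KameletBinding
metadata:
  name: s3-sink-binding            # hypothetical binding name
spec:
  source:
    ref:
      kind: Kamelet
      apiVersion: camel.apache.org/v1alpha1
      name: kafka-source           # assumption: Kafka side uses the kafka-source Kamelet
    properties:
      bootstrapServers: "${KAFKA_BOOTSTRAP}"
      topic: "${KAFKA_TOPIC}"
      securityProtocol: "PLAINTEXT"  # testing only; some catalog versions also require user/password
  sink:
    ref:
      kind: Kamelet
      apiVersion: camel.apache.org/v1alpha1
      name: aws-s3-sink
    properties:
      bucketNameOrArn: "${COS_BUCKET}"
      accessKey: "${COS_ACCESS_KEY}"
      secretKey: "${COS_SECRET_KEY}"
      region: "us-south"           # placeholder value for the region property
      overrideEndpoint: true       # must be true so uriEndpointOverride is used
      uriEndpointOverride: "${COS_ENDPOINT}"
EOF

echo "Wrote s3-sink-binding.yaml"
```

The generated file can then be applied in the source namespace with the oc apply command above, for example oc -n <NAMESPACE> apply -f s3-sink-binding.yaml. Reading credentials from environment variables keeps the real keys out of the script itself.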

It takes some time (about 5 minutes) before new pods appear; you can watch for them with oc -n <NAMESPACE> get pods. If the binding is successful, you should see at least two new pods:

- One build pod in Completed state.
- One sink-binding pod in Running state.

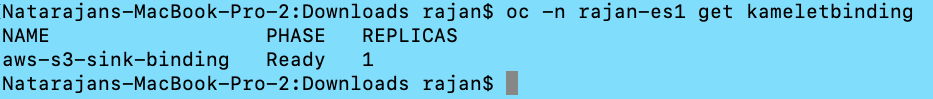

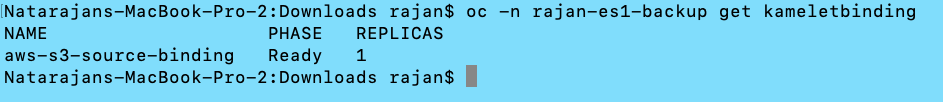

You can also check the status of the KameletBinding; once it is working, its phase should be Ready:

oc -n <NAMESPACE> get kameletbinding

Next, test the setup by producing messages to the topic and checking that new objects appear in the IBM COS bucket.

To be done in the Target Namespace

This step restores the contents from IBM COS to a Kafka topic.

- Create an s3-source KameletBinding. You can start from the sample KameletBinding YAML file provided here. Create the KameletBinding with:

oc -n <NAMESPACE> apply -f <yaml file>

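The main parameters that must be checked and changed are:

- Kafka connection details: bootstrapServers, topic and the connection (security) parameters of the target cluster. For testing purposes, you can use a PLAINTEXT connection.
- S3 connection details: bucketNameOrArn, accessKey, secretKey, overrideEndpoint (MUST be set to true, so that the IBM COS endpoint is used instead of the AWS one) and uriEndpointOverride (the public endpoint of the IBM COS instance).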
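The restore direction can be sketched the same way, as a minimal example rather than the sample file referenced above: the script below writes an s3-source KameletBinding to s3-source-binding.yaml, assuming the Camel K 1.x API (camel.apache.org/v1alpha1) and that the Kafka side is written through the kafka-sink Kamelet from the Apache Camel Kamelet catalog. All names, addresses and the region value are hypothetical placeholders. One property worth checking is deleteAfterRead: in the aws-s3-source Kamelet it defaults to true, which removes each object from the bucket after it is read and so consumes the backup. The sketch sets it to false; according to the Camel AWS S3 component documentation, the same objects may then be read again on later polls, so it may be worth deleting the KameletBinding once the restore is complete.

```shell
#!/usr/bin/env bash
# Minimal sketch: write an s3-source KameletBinding (IBM COS bucket -> Kafka topic).
# All default values are hypothetical placeholders; override them via environment variables.
set -euo pipefail

KAFKA_BOOTSTRAP="${KAFKA_BOOTSTRAP:-my-target-kafka-bootstrap:9092}"  # hypothetical PLAINTEXT listener
KAFKA_TOPIC="${KAFKA_TOPIC:-my-topic}"                                # hypothetical topic to restore into
COS_BUCKET="${COS_BUCKET:-my-backup-bucket}"                          # hypothetical bucket name
COS_ACCESS_KEY="${COS_ACCESS_KEY:-<ACCESS-KEY>}"
COS_SECRET_KEY="${COS_SECRET_KEY:-<SECRET>}"
# Example public endpoint format; use the endpoint shown for your own IBM COS instance.
COS_ENDPOINT="${COS_ENDPOINT:-https://s3.us-south.cloud-object-storage.appdomain.cloud}"

cat > s3-source-binding.yaml <<EOF
apiVersion: camel.apache.org/v1alpha1
kind: KameletBinding
metadata:
  name: s3-source-binding          # hypothetical binding name
spec:
  source:
    ref:
      kind: Kamelet
      apiVersion: camel.apache.org/v1alpha1
      name: aws-s3-source
    properties:
      bucketNameOrArn: "${COS_BUCKET}"
      accessKey: "${COS_ACCESS_KEY}"
      secretKey: "${COS_SECRET_KEY}"
      region: "us-south"           # placeholder value for the region property
      overrideEndpoint: true       # must be true so uriEndpointOverride is used
      uriEndpointOverride: "${COS_ENDPOINT}"
      deleteAfterRead: false       # default (true) deletes each backed-up object after reading it
  sink:
    ref:
      kind: Kamelet
      apiVersion: camel.apache.org/v1alpha1
      name: kafka-sink             # assumption: Kafka side uses the kafka-sink Kamelet
    properties:
      bootstrapServers: "${KAFKA_BOOTSTRAP}"
      topic: "${KAFKA_TOPIC}"
      securityProtocol: "PLAINTEXT"  # testing only; some catalog versions also require user/password
EOF

echo "Wrote s3-source-binding.yaml"
```

Apply the generated file in the target namespace, for example with oc -n <NAMESPACE> apply -f s3-source-binding.yaml.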

It takes some time (about 5 minutes) before new pods appear; you can watch for them with oc -n <NAMESPACE> get pods. If the binding is successful, you should see at least two new pods:

- One build pod in Completed state.
- One binding pod for the s3-source KameletBinding in Running state.

You can also check the status of the KameletBinding; once it is working, its phase should be Ready:

oc -n <NAMESPACE> get kameletbinding

You should see the topic being created and populated with messages from IBM COS.